In our recent blog post, “How to Implement and Measure First Call Resolution Effectively”, we concluded that in Contact Centres the most highly rated KPI – first call resolution (FCR) – should not be construed as an indication of customer experience or happiness. It’s only an indication of efficient service, not of satisfaction and loyalty.

Why measuring customer satisfaction is important

Combining FCR with metrics that measure customer satisfaction gives managers a more complete picture of customer sentiment and behaviour, and the ability to make data-driven predictions on future behaviour. It also provides the information needed to identify and prioritise changes within a contact centre (or any other customer-facing channel) to improve results.

A high FCR score does not necessarily correlate with customer happiness and loyalty. Let’s look at two scenarios:

Scenario one – resolved, but unhappy

A customer calls in to request a refund on a recent purchase and is advised that the company policy is that refunds are not provided after 10 days (and it’s been 11 days). Technically the issue was resolved on this first call, but no doubt the customer would not be happy, particularly as this was their third purchase and their first request for a refund.

Scenario two – resolution imbalance, loyalty in jeopardy

A loyal customer, who has been purchasing your services for years, calls in and enters their ID information into your IVR, waits in the queue for 15 minutes, and is then asked to repeat their ID details when the agent finally answers. The agent takes 10 minutes confirming details, asking questions and getting the answers from a supervisor, and then advises the customer to go to the company’s website and fill out a form. By this time the customer has wasted 25 valuable minutes of their busy day, and the issue isn’t really resolved because they still have to take further action themselves. Why didn’t the agent know the answers to the questions, and why couldn’t the agent fill out the form for them? From the agent’s perspective the issue was resolved, but not from the customer’s, and their frustration could make them question their decision to stay with this company.

FCR needs to be viewed and measured in conjunction with some form of customer satisfaction gauge to have any true meaning and value.

Building your survey: the 5 top tips

In Contact Centres, customer satisfaction feedback is usually obtained through an IVR-based automated post-call survey, or by sending a link for the customer to complete an online form.

1. Do it quickly.

Analysts at Gartner advised that satisfaction measurement feedback collected immediately after an interaction event is 40% more accurate than feedback collected 24 hours (or later) after the event.

2. Keep surveys short and relevant.

The goal of a survey is to collect actionable information, and if it takes too long the customer will abandon it. Keep it short, relevant and focused on the goal: finding out what you need to do to improve your customer’s experience with you. It’s not a marketing survey.

3. Use a combination of metrics.

You will get the most value in terms of actionable feedback if you use a combination of customer satisfaction scores.

4. Use the right scale.

For most consumer surveys, a 10-point scale is standard. For IVR-based automated surveys, however, ACSI (the American Customer Satisfaction Index) recommends a 9-point scale that uses the phone keypad digits 1 through 9. With ACSI, 9-point scales are converted to 10-point scales, and final scores are converted to a 100-point scale for reporting and benchmarking.
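To make the scale conversion concrete, here is a minimal sketch in Python. ACSI’s exact conversion method is not described in this post, so the sketch assumes a simple linear rescaling; the function names (`rescale`, `keypad_to_ten_point`, `ten_point_to_hundred`) are hypothetical and only for illustration.

```python
def rescale(score, old_min, old_max, new_min, new_max):
    """Linearly map a score from [old_min, old_max] to [new_min, new_max].

    Assumption: a plain linear mapping. This is not confirmed to be the
    method ACSI itself uses.
    """
    if not old_min <= score <= old_max:
        raise ValueError(f"score {score} is outside {old_min}..{old_max}")
    return new_min + (score - old_min) * (new_max - new_min) / (old_max - old_min)


def keypad_to_ten_point(score):
    """Convert a 1-9 keypad answer to a 1-10 scale (assumed linear)."""
    return rescale(score, 1, 9, 1, 10)


def ten_point_to_hundred(score):
    """Convert a 1-10 score to a 0-100 reporting scale (assumed linear)."""
    return rescale(score, 1, 10, 0, 100)


if __name__ == "__main__":
    for key in (1, 5, 9):
        ten = keypad_to_ten_point(key)
        print(key, ten, ten_point_to_hundred(ten))
```

Under this assumption the lowest and highest keypad answers map to the ends of each scale, and the middle key (5) lands at the midpoint.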

5. Measure what matters.

Say a survey asks customers to rank a bank by “important characteristics” and one of the factors that you ask them to rank is branch locations. Having a convenient local branch is not what compels people to choose a particular bank — interest rates, fees, products, online facilities and customer service are the usual deciders. So, stick to real cause-and-effect analytics.

Integrate the customer satisfaction survey process into the Contact Centre process so it happens automatically. Use a combination of Contact Centre KPIs and customer feedback data to identify recurring patterns in issues, processes or agents. Keep team leaders and agents informed and get them involved in developing solutions.

The purpose of gathering all this valuable customer feedback is to take action to improve customer experience, build customer loyalty and increase revenue. You’ve spent the time and money to collect the data, so make use of it where it really counts. All three measurement scores discussed in this post (CSAT, NPS and CES) can help you with this, but they should always be considered a means to an end, the end being higher customer satisfaction.

How to measure customer sentiment

The easiest way to measure customer sentiment is through a short post-contact survey. But which one? Here are some options to consider:

1. Customer Satisfaction Score (CSAT)

CSAT has been around for many years and is simple to use. It usually consists of one or more simple questions relating to satisfaction with product, service or experience, like:

How satisfied were you with your recent product / service purchase?

How satisfied were you with the service provided by our Customer Service team today?

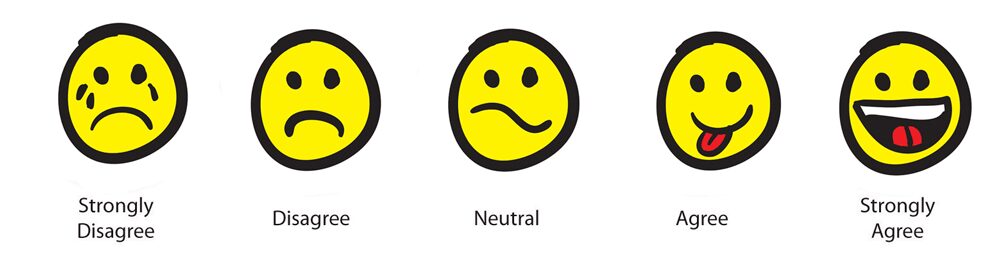

Customers are asked to rate their satisfaction for each question on a scale:

Very Unsatisfied / Unsatisfied / Neutral / Satisfied / Very satisfied

The CSAT score is the number of respondents who answered Satisfied or Very satisfied, usually expressed as a percentage of all respondents. The higher the number, the higher your customer satisfaction.
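As a rough illustration, the calculation can be sketched in Python. This sketch assumes the score is reported as a percentage of all respondents and that the two top answers on the scale above count as satisfied; the function name `csat_percent` is hypothetical.

```python
# Answers counted as "satisfied" (assumption: the top two options on the scale).
SATISFIED_ANSWERS = {"Satisfied", "Very satisfied"}


def csat_percent(responses):
    """Return the share of satisfied respondents as a percentage (0-100)."""
    if not responses:
        raise ValueError("at least one response is required")
    satisfied = sum(1 for answer in responses if answer in SATISFIED_ANSWERS)
    return 100 * satisfied / len(responses)


if __name__ == "__main__":
    sample = ["Very satisfied", "Satisfied", "Neutral", "Unsatisfied"]
    print(csat_percent(sample))  # 2 of 4 respondents are satisfied -> 50.0
```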

The limitation of CSAT is that it focuses on a specific interaction (a support event or product) and not on the wider relationship with your organisation.

2. Net Promoter Score (NPS)

NPS was officially launched into the public realm in late 2003 in a Harvard Business Review article. It is primarily a single question designed to measure long-term happiness and predict customer loyalty.

The question is:

How likely would you be to recommend our company to a friend or colleague?

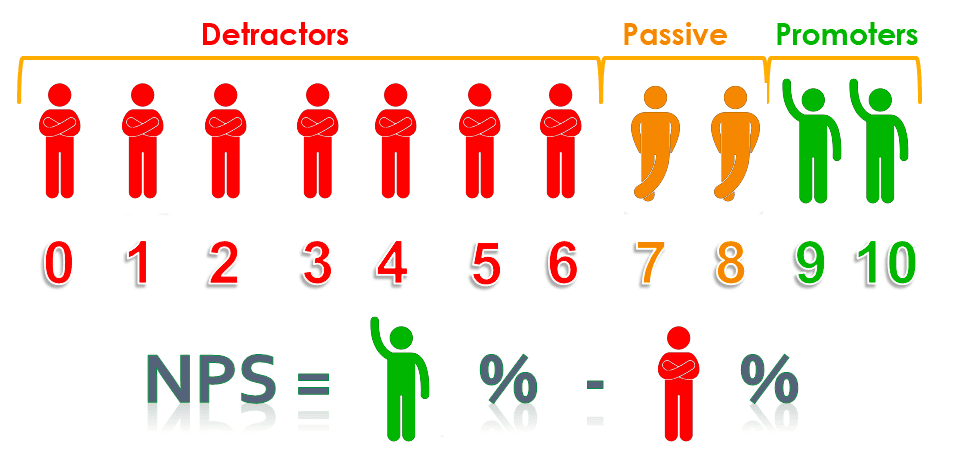

Customers are asked to choose an answer on a scale from 0 (Very Unlikely) to 10 (Very Likely).

People scoring 0-6 are known as detractors, those scoring 7 and 8 are called passives, and those scoring 9 and 10 are called promoters. The NPS is calculated by subtracting the percentage of detractors (scores of 0-6) from the percentage of promoters (scores of 9 and 10).
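The calculation described above can be sketched in a few lines of Python. The function name `net_promoter_score` and the sample data are hypothetical, chosen only to illustrate the arithmetic.

```python
def net_promoter_score(scores):
    """Return NPS: percentage of promoters minus percentage of detractors.

    `scores` is a list of whole-number answers from 0 to 10.
    The result ranges from -100 (all detractors) to 100 (all promoters).
    """
    if not scores:
        raise ValueError("at least one score is required")
    if any(not isinstance(s, int) or not 0 <= s <= 10 for s in scores):
        raise ValueError("scores must be whole numbers from 0 to 10")
    promoters = sum(1 for s in scores if s >= 9)   # answers of 9 or 10
    detractors = sum(1 for s in scores if s <= 6)  # answers of 0 to 6
    # Passives (7 and 8) count towards the total but not towards either group.
    return 100 * (promoters - detractors) / len(scores)


if __name__ == "__main__":
    sample = [10, 9, 9, 8, 7, 6, 3, 10, 9, 10]
    # 6 promoters (60%) and 2 detractors (20%) out of 10 answers.
    print(net_promoter_score(sample))  # 40.0
```

Note that passives lower the score indirectly: they add to the total number of respondents without adding to the promoter count.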

NPS has been shown to correlate with future revenue growth, although there is of course no proof that your promoters will actually recommend you in real life. Because the question is generic, it cannot pinpoint actionable improvement areas.

3. Customer Effort Score (CES)

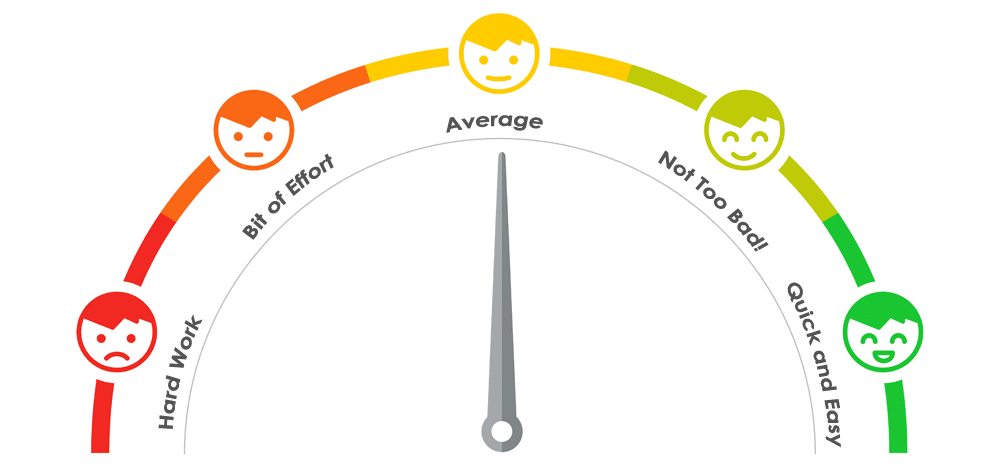

The CES score relates to how much effort the customer has to make when dealing with the organisation. The reasoning behind this metric is that you can increase customer loyalty by making it easy and quick for customers to get help and solve their issues.

A typical CES metric involves asking a customer to rate their experience with a service descriptor statement, such as:

I found the self-help options made it easy for me to get the answers I needed.

Strongly disagree/ Disagree/ Somewhat disagree/ Neutral/ Somewhat agree/ Agree/ Strongly agree

When the scores are aggregated, a high average indicates that you’re making things easy for your customers, whilst a low average means that customers need to put in too much effort to get help from your company.
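One way to aggregate the answers is sketched below in Python. It assumes each point on the seven-step agreement scale is given a value from 1 (Strongly disagree) to 7 (Strongly agree) and that the score is the simple average; that numbering and the function name `customer_effort_score` are assumptions made for illustration.

```python
# Agreement scale in order from lowest to highest.
CES_SCALE = [
    "Strongly disagree",
    "Disagree",
    "Somewhat disagree",
    "Neutral",
    "Somewhat agree",
    "Agree",
    "Strongly agree",
]

# Assumption: answers are valued 1 to 7 in scale order.
CES_POINTS = {label: points for points, label in enumerate(CES_SCALE, start=1)}


def customer_effort_score(responses):
    """Return the average CES (1-7) for a list of scale labels."""
    if not responses:
        raise ValueError("at least one response is required")
    # An unknown label raises KeyError rather than being silently ignored.
    return sum(CES_POINTS[answer] for answer in responses) / len(responses)


if __name__ == "__main__":
    sample = ["Strongly agree", "Agree", "Neutral"]
    print(round(customer_effort_score(sample), 2))  # (7 + 6 + 4) / 3 -> 5.67
```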

Whilst CES is useful for pinpointing actionable service area improvements (if the right statements or questions are asked), it doesn’t delve into why customers had issues in the first place or what the obstacles were.

Which customer metric should you use?

When comparing each customer sentiment metric, we can conclude that each has its own applicability and limitations.

Each type of metric can stand on its own as a measurement tool, but more valuable and actionable insights will be gained from combining them into an optimised survey. The secret lies in designing questions that give you feedback and metrics that really matter, that is, ones that will help with identifying and correcting process issues and improving results.

Premier Contact Point Makes Survey Management Easy

Our cloud-based solution, Premier Contact Point, provides powerful features to facilitate the delivery, reporting and analysis of surveys. You can even design your own post-call survey with our simple-to-use design tools.